Teaching Robots to Follow Human Instructions

1. Introduction

Autonomous robots should be able to perform tasks with little to no human supervision. However there are several challenges that have to be solved before we can build self-operating robots like we discussed in this blog post (link to blog post 1).

Let’s imagine a scenario where a robot has to follow orders from a human to complete a certain task. In this scenario, it is necessary to conduct several tasks so that the robot can accomplish the order. First, the input command has to be understood by the robot by creating some kind of internal representation of the human request that can be translated into a specific task. Next, this task has to be decomposed in smaller subtasks or steps that have to be executed sequentially. For each of the steps, it may be required to interact with the world, i.e., move or perform dexterous movements (for instance, to grasp an object). Finally, once all the steps have been completed, the robot should verify whether the full task was successfully accomplished.

Despite the recent advances in AI, a single model can not deal with the whole process. It is necessary to integrate several, specialized, models that can solve each task separately. In the next section we will describe those models using a real example.

2. Demo. Pick and place using natural language, high level planning, and learning from human demonstrations

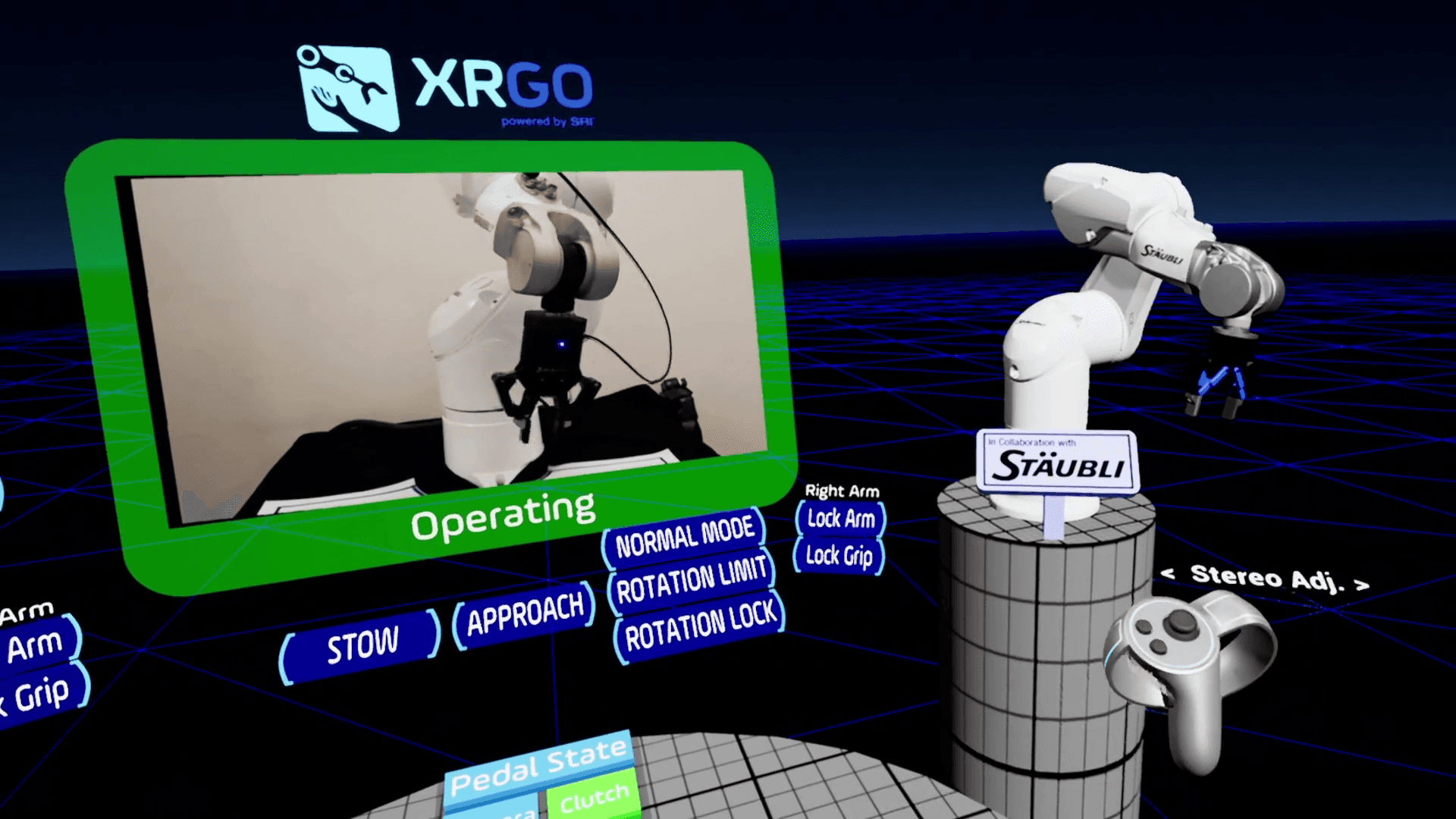

In the following video, we can see a demo of an intelligent robotic arm picking and placing items of different sizes, shapes and rigidity. This demo has been jointly developed by Avsr AI and SRI's XRGo robot telemanipulation platform; it illustrates all the steps needed to make a robot follow human instructions.

The process starts when a user sends an order to the robot in the form of a spoken command. This command is expressed in natural language to facilitate the interaction between the human and the robot. This requires the robot to understand spoken language and, therefore, the first step is to transform the input speech signal into some kind of semantic representation that the robot can use to understand the task to be carried out. Usually, it is necessary to break the task down into smaller subtasks that the robot can execute sequentially. This is known as high-level or task planning. In this demo, this plan is produced by a large language model which has been fine-tuned to generate the subtasks from an input command in natural language.

Once this plan is generated, the robot must perform the subtasks one after the other. This is achieved by running a different kind of planner, called low-level or motion planner. This planner is responsible to move the robot actuators to reach a specific place where an object can be grasped or placed. There are several techniques to build this kind of planners. Among them, we can cite Imitation learning or learning from expert demonstrations which will be described later in this post.

Finally, after the robot finishes its tasks, it is necessary to check whether the order was successfully completed. This step is crucial in a real scenario because it might require to conduct any kind of corrective action, like relying on human intervention, before the robot can keep performing more tasks. In this demo, a separate verification module is used to validate the robot successfully completed the task it was supposed to do. This model uses computer vision with robot camera; other modalities or sensor data can further enhance the accuracy of a verification module.

3. XRGo teleoperation system

[Intro paragraph - feel free to change for better flow]

XRGo [1] is a teleoperation and telepresence solution developed by robotics researchers at SRI. The software is compatible with both purpose-built full robots and industrial robot arm systems from popular manufacturers. The focus of this software is low latency and robust teleoperation; we have extended this system to support observation and control by automated methods, including AI.

During teleoperation, a human user wearing the VR headset is provided a 3D view of the robot work area. The hand controllers can be moved to control the robot end effector relative to the camera position. This is a simple and direct solution that maps the human’s action space to the robot’s action space, even if they are different. This also reduces the workload on the operator, who doesn’t have to translate different perspectives (is the robot facing towards or away from the user?) or memorize joint space and directions (will it move this direction if i increase the joint 4 value?). VR also gives us unlimited ability to add unobtrusive UI and tools. A user who has never operated a robot before is able to understand and begin completing complex tasks in under a minute.

Key breakthroughs of our system:

This proprietary teleoperation framework has benefits on the AI control side as well. With the ability to observe and capture a human’s control of the robot, a large amount of training data in the real world can be generated without interrupting a normal operation scenario. Additionally, the interfaces to sense and act are exposed programmatically, and can be handed over to a machine policy at any time.

The user-to-robot control latency is below 10 ms, which is not perceptible by a human user. A user can also configure robot motion smoothing and acceleration/velocity limits, which can add safety at the cost of perceived delay.

Finally, the system can be integrated into any robotic hardware which enables data collection across diverse environments using the available hardware.

4. Learning from demonstrations (LfD)

To train a robot to perform tasks autonomously, reinforcement Learning (RL) have been extensively used in very different environments. Although this paradigm can provide robots with a great degree of autonomy there are some drawbacks that limit their application. First, RL requires some policy to start with (usually a random policy when starting “from scratch”.) and an enormous amount of data for a policy to converge. Oftentimes, it learns from both failure and success episodes. This is not a problem in simulation but it raises serious concerns of safety and practicality in the real-world. The cost, in terms of money and time, may make unpractical to use RL models in some complex scenarios. Besides, RL requires a well-defined reward function that is measurable. In general, it is hard to find the right reward function for every task. In real life, the reward is very hard to evaluate even if it is well-defined.

Imitation learning or learning from demonstrations (LfD), on the other hand, is a powerful technique to teach dexterity and other skills to robots. This method is based on observing a human performing a task and then building a model that can emulate their skills. We can record the trajectories of an operator controlling the robot and use this data to train a model that captures the operator behavior. LfD offers several advantages over pure RL models. First, it is able to generate a reasonable policy with a very small set of demonstration data. Second, reward functions are not necessary and it does not require failure examples to learn. Besides, if demonstration data is readily available and abundant (e.g. human driving dataset for self-driving cars), LfD models can be cheap to produce. However, this is not the case for dexterous tasks in robotics and, although several datasets are available for research purposes, using specific data, collected in the same environment and conditions as the real task to be solved might be necessary to guarantee a successful deployment of an autonomous robot.

In the recent years, deep learning methods and generative AI have made it possible to produce robust policies based on LfD approaches. One example is Diffusion Policy (DP), (https://diffusion-policy.cs.columbia.edu/), based on diffusion models, originally developed in the image generation domain, which can be also applied to policy learning in the action/trajectory space. DP is a generative model, which means that it can learn the distribution of some data and then be able to generate new samples from this distribution (e.g. image generation, where the model learns how to produce new images from a real-image dataset). When applied to motion planing in robotics, the model tries to capture the probability distribution of the robot joint positions conditioned to the observations (typically, the robot current state and the images captured by the cameras)

This model works by iteratively adding noise to the samples and then learning how to remove the noise from them. This allows the model to capture the entire data distribution of the training samples instead of just learning the dominant samples in the dataset. In the case of learning robot trajectories from human demonstrations this means that the model will be able to learn multiple modes to solve a task while making sure that the model will commit to just one mode during inference.

5. Extensions and Future

There are interesting extensions to this base model. For instance, in [2], a diffusion policy for goal-conditioned Lfd approach is proposed. The basic idea is to train a versatile policy able to achieve different goals (unlike in “vanilla” diffusion policy where the model is trained on a very specific task). Also, in [3] a method is presented where preexisting planners are used to generate high-quality demonstrations that are employed to train a diffusion policy for bi-manual manipulation. There are also approaches that can learn a diffusion policy using data from a very specific task and generalize to new tasks (zero-shot learning) with high accuracy [4]. In [5] a Vision Language model is used to analyze both the task description and the image observation to generate a set of key steps that have to be followed to accomplish the task. The VLM is also used to mark the key points in the observation (image) that are relevant for the task (for instance, the grasp point for an object).

Moreover, to achieve robust robot dexterity, especially when handling objects with diverse physical properties, integrating tactile feedback into policy learning is crucial. Relying solely on visual information limits a robot's ability to discern the necessary interaction forces for grasping and manipulating items of varying rigidity and texture, as shown in [6, 7]. These work show that tactile-conditioned diffusion models can learn nuanced manipulation behaviors, like adjusting grip strength based on texture or object deformation, even when visual input is absent or occluded. This is essential for reliable and fine-grained control, especially in cluttered or visually ambiguous environments.

References

[2] Goal-Conditioned Imitation Learning using Score-based Diffusion Policies. https://arxiv.org/abs/2304.02532

[3] Planning-Guided Diffusion Policy Learning for Generalizable Contact-Rich Bimanual Manipulation.

https://arxiv.org/pdf/2412.02676

[4] Generalizable Robotic Manipulation: Object-Centric Diffusion Policy with Language Guidance https://openreview.net/forum?id=KsLzUjozIe

[5] DISCO: Language-Guided Manipulation with Diffusion Policies and Constrained Inpainting.

https://arxiv.org/pdf/2406.09767

[6] 3D-ViTac: Learning Fine-Grained Manipulation with Visuo-Tactile Sensing

https://arxiv.org/pdf/2410.24091

[7] TacDiffusion: Force-domain Diffusion Policy for Precise Tactile Manipulation https://arxiv.org/abs/2409.11047